Whenever I deliver conferencing training sessions, someone asks: ‘If one acoustic echo canceller (AEC) is good, won’t two AECs be better?’

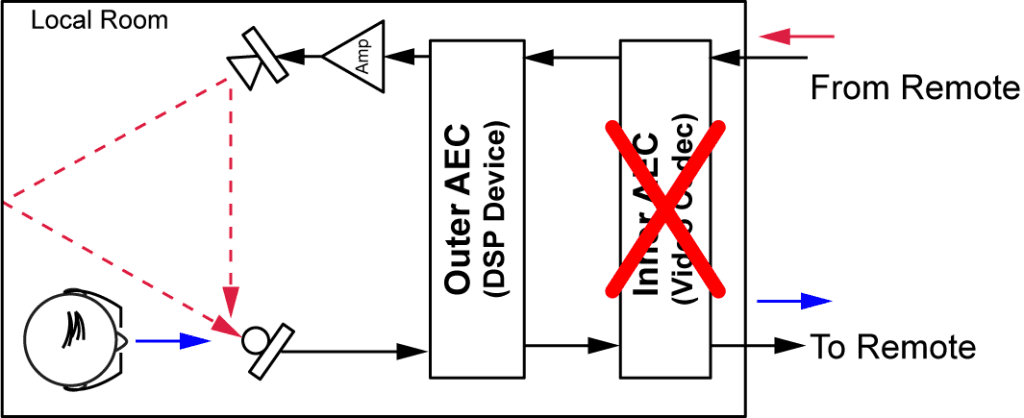

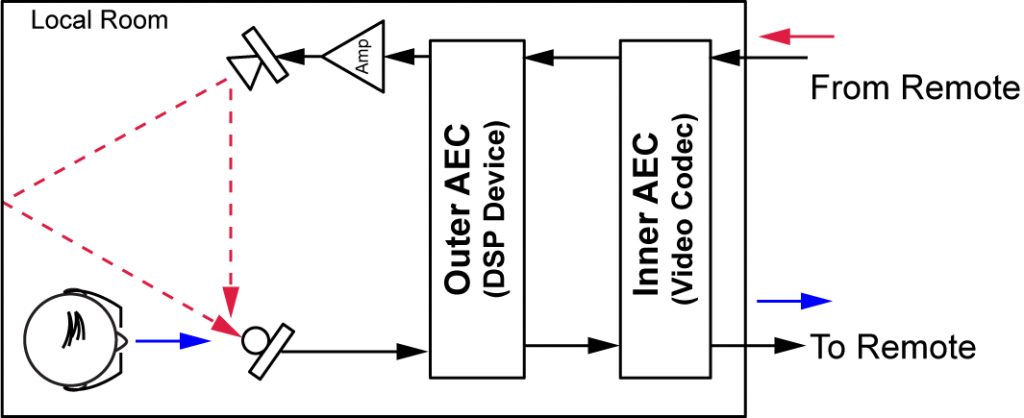

It’s a good question because it goes to the heart of how acoustic echoes are modeled and how an AEC works. It typically comes up when installers and designers use an ‘Outer AEC’, such as an audio DSP with AEC from Polycom, ClearOne, Biamp, etc., and connect the DSP system to a video codec that also has a built-in AEC (‘Inner AEC’), as shown in the following figure.

The short answer to the question of whether two AECs are better than one is no. One AEC is good; two AECs are bad.

How can this be?

It comes down to how AECs operate and the assumptions they make while adapting to and removing echoes, particularly when there is non-linear processing in the signal chain.

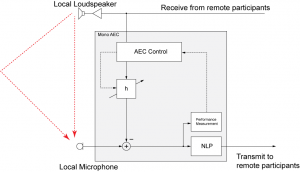

To start with, see this earlier post about how AECs work. The short version is that an AEC builds a linear model of the room and subtracts the modeled echo from the audio actually picked up by the microphone, as shown in the figure below.

Any residual signal after the subtraction of the linear room model (output of h) from the microphone signal passes through a non-linear processing (NLP) block that effectively suppresses the errors that the AEC linear model can’t subtract out due to changes in the room or imperfections in the linear and time-invariant model of the AEC.
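In symbols, the description above can be sketched as follows. The notation is my own shorthand rather than anything taken from a particular product: $x(n)$ is the From Remote reference, $d(n)$ is the microphone signal, $\hat{h}_k$ are the $N$ coefficients of the adaptive room model, and $g(n)$ is a time-varying NLP gain between 0 and 1.

$$
\hat{y}(n) = \sum_{k=0}^{N-1} \hat{h}_k \, x(n-k), \qquad e(n) = d(n) - \hat{y}(n), \qquad \text{To Remote}(n) = g(n)\, e(n)
$$

The first two expressions are the linear part: model the echo, then subtract it. The last one is the NLP, and because $g(n)$ changes over time depending on the signal, it is exactly the kind of processing that a downstream linear, time-invariant model cannot describe.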

Consider a room where a DSP audio device has AEC enabled and is connected to a video conferencing system whose codec also has its AEC enabled. The Outer AEC will remove the echo of the From Remote signal and may also apply non-linear processing (NLP). This NLP can cause problems for the Inner AEC, because the Inner AEC sees the reference signal (From Remote) but sees almost no echo at its input, only residual errors from the Outer AEC. The Inner AEC therefore never really gets a chance to adapt to the room, because there isn’t enough echo signal to adapt to. In addition, the Outer AEC device may be applying other intentional non-linear processing, such as compression, automatic gain control, and noise suppression, all of which violate the linear, time-invariant model that the Inner AEC assumes and confuse it further.
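To make the starvation effect concrete, here is a minimal toy simulation of two cascaded cancellers. It is only a sketch under stated assumptions: both AECs are plain NLMS adaptive filters, the NLP is a crude center clipper, the four-tap `room` response is made up, and there is no local talker. Real products use far more elaborate algorithms, so treat this as an illustration of the principle, not of any vendor's implementation.

```python
import random

def nlms(reference, mic, taps=8, mu=0.5, eps=1e-8):
    """Toy NLMS echo canceller; returns (residual signal, learned model h)."""
    h = [0.0] * taps              # current estimate of the echo path
    x = [0.0] * taps              # recent reference samples, newest first
    residual = []
    for r, d in zip(reference, mic):
        x = [r] + x[:-1]
        e = d - sum(hi * xi for hi, xi in zip(h, x))   # subtract modeled echo
        norm = sum(xi * xi for xi in x) + eps          # reference energy
        h = [hi + mu * e * xi / norm for hi, xi in zip(h, x)]
        residual.append(e)
    return residual, h

def simulate(n=4000, seed=0):
    rng = random.Random(seed)
    room = [0.5, -0.3, 0.2, 0.1]  # hypothetical echo path, for illustration only
    from_remote = [rng.gauss(0.0, 1.0) for _ in range(n)]
    # The microphone picks up only the echo (no local talker in this toy case).
    mic = [sum(room[k] * from_remote[i - k]
               for k in range(len(room)) if i - k >= 0)
           for i in range(n)]
    # Outer AEC: linear cancellation followed by a crude NLP (center clipper).
    outer_out, h_outer = nlms(from_remote, mic)
    outer_out = [0.0 if abs(e) < 1e-3 else e for e in outer_out]
    # Inner AEC: same reference, but its input is the Outer AEC's output.
    _, h_inner = nlms(from_remote, outer_out)
    return room, h_outer, h_inner

room, h_outer, h_inner = simulate()
print("room       :", room)
print("outer model:", [round(v, 3) for v in h_outer[:4]])
print("inner model:", [round(v, 3) for v in h_inner[:4]])
```

In this toy setup the outer model ends up close to the room response, while the inner model ends up close to all zeros: it has the reference but no echo left to learn from. If the room or the Outer AEC's behavior then changes, the Inner AEC has no useful model to fall back on.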

Because of the Outer AEC processing, the Inner AEC may introduce further artifacts into the To Remote signal if it decides to apply its own NLP. The result is additional processing on the transmit signal (‘To Remote’) that reduces its overall audio quality.

Also, the Outer AEC may run on multiple microphones separately, with the processed microphone signals then summed by an automatic microphone mixer (itself a non-linear processor) to create a single feed for the remote participants. That single feed can contain a different residual echo from each microphone, which confuses the Inner AEC even further.
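One way to write this down, using notation I am assuming purely for illustration: let $e_i(n)$ be the residual from microphone $i$ after the Outer AEC, and let $g_i(n)$ be the gain the automatic mixer applies to that microphone at time $n$. The feed presented to the Inner AEC is then

$$
u(n) = \sum_{i=1}^{M} g_i(n)\, e_i(n)
$$

Each $e_i(n)$ carries the residual echo of a different acoustic path, and the gains $g_i(n)$ move as the mixer opens and closes microphones. The echo path seen by the Inner AEC therefore changes every time the mix changes, so there is no single fixed response for it to converge to.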

If the previous reasons didn’t convince you that two AECs are bad, consider that most developers of video conferencing systems (not to mention the DSP audio device developers) don’t test their systems this way. That means the Inner AEC may add other artifacts while trying to compensate for the Outer AEC, such as applying gain or suppressing other signals.

The final result is that two AECs are worse than one, so make sure you disable the Inner AEC on your video codec if you are using a DSP with AEC processing. The Outer AEC does all the ‘heavy audio lifting’, and the Inner AEC should be turned off!